After an upgrade of a vCenter Server system to version 6.7 Update 2, pre-upgrade First Class Disks (FCD) might not be listed in the Global Catalog. During an upgrade of your vCenter Server system to version 6.7 Update 2, the FCD Global Catalog might pick an ESXi host that is not yet updated to invoke a sync and the sync fails.

| Developer(s) | VMware, Inc. |
|---|---|
| Initial release | March 23, 2001; 18 years ago |
| Stable release | |
| Platform | IA-32 (x86-32) (discontinued in 4.0 onwards),[1] x86-64 |
| Type | Native hypervisor (type 1) |
| License | Proprietary |
| Website | www.vmware.com/products/esxi-and-esx.html |

VMware ESXi (formerly ESX) is an enterprise-class, type-1 hypervisor developed by VMware for deploying and serving virtual computers. As a type-1 hypervisor, ESXi is not a software application that is installed on an operating system (OS); instead, it includes and integrates vital OS components, such as a kernel.[2]

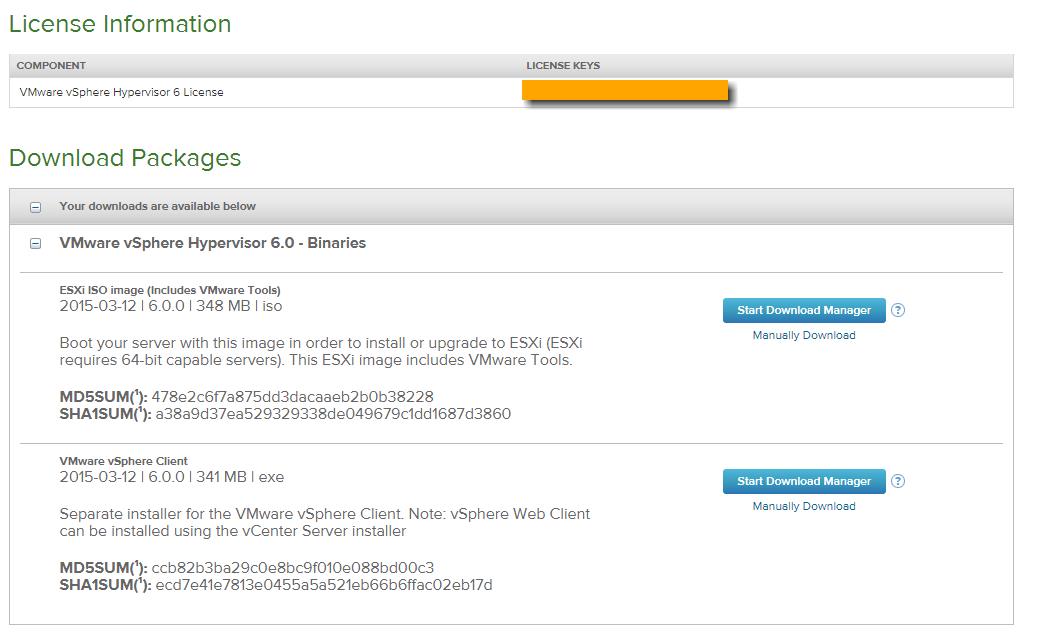


- Before using the free version of the ESXi hypervisor, we need to know its limitations. With the free version we get no vendor support and lose the ability to manage ESXi through vCenter: once ESXi is activated with the free license, the host cannot be added to vCenter.

After version 4.1 (released in 2010), VMware renamed ESX to ESXi. ESXi replaces Service Console (a rudimentary operating system) with a more closely integrated OS. ESX/ESXi is the primary component in the VMware Infrastructure software suite.[3]

The name ESX originated as an abbreviation of Elastic Sky X.[4][5] In September 2004, the replacement for ESX was internally called VMvisor, but later changed to ESXi (as the 'i' in ESXi stood for 'integrated').[6][7]


Architecture

ESX runs on bare metal (without running an operating system)[8] unlike other VMware products.[9] It includes its own kernel: A Linux kernel is started first,[10] and is then used to load a variety of specialized virtualization components, including ESX, which is otherwise known as the vmkernel component.[11] The Linux kernel is the primary virtual machine; it is invoked by the service console. At normal run-time, the vmkernel is running on the bare computer, and the Linux-based service console runs as the first virtual machine. VMware dropped development of ESX at version 4.1, and now uses ESXi, which does not include a Linux kernel.[12]

The vmkernel is a microkernel[13] with three interfaces: hardware, guest systems, and the service console (Console OS).

Interface to hardware

The vmkernel handles CPU and memory directly, using scan-before-execution (SBE) to handle special or privileged CPU instructions[14][15] and the SRAT (system resource allocation table) to track allocated memory.[16]

Access to other hardware (such as network or storage devices) takes place using modules. At least some of the modules derive from modules used in the Linux kernel. To access these modules, an additional module called vmklinux implements the Linux module interface. According to the README file, 'This module contains the Linux emulation layer used by the vmkernel.'[17]

The vmkernel uses the device drivers:[17]

- net/e100

- net/e1000

- net/e1000e

- net/bnx2

- net/tg3

- net/forcedeth

- net/pcnet32

- block/cciss

- scsi/adp94xx

- scsi/aic7xxx

- scsi/aic79xx

- scsi/ips

- scsi/lpfcdd-v732

- scsi/megaraid2

- scsi/mptscsi_2xx

- scsi/qla2200-v7.07

- scsi/megaraid_sas

- scsi/qla4010

- scsi/qla4022

- scsi/vmkiscsi

- scsi/aacraid_esx30

- scsi/lpfcdd-v7xx

- scsi/qla2200-v7xx

These drivers mostly equate to those described in VMware's hardware compatibility list.[18] All these modules fall under the GPL. Programmers have adapted them to run with the vmkernel: VMware Inc has changed the module-loading and some other minor things.[17]

Service console

In ESX (and not ESXi), the Service Console is a vestigial general purpose operating system most significantly used as bootstrap for the VMware kernel, vmkernel, and secondarily used as a management interface. Both of these Console Operating System functions are being deprecated from version 5.0, as VMware migrates exclusively to the ESXi model.[19] The Service Console, for all intents and purposes, is the operating system used to interact with VMware ESX and the virtual machines that run on the server.

Purple Screen of Death

In the event of a hardware error, the vmkernel can catch a Machine Check Exception.[20] This results in an error message displayed on a purple diagnostic screen, colloquially known as the purple screen of death (PSoD, cf. Blue Screen of Death (BSoD)).

Upon displaying a purple diagnostic screen, the vmkernel writes debug information to the core dump partition. This information, together with the error codes displayed on the purple diagnostic screen, can be used by VMware support to determine the cause of the problem.

Versions

VMware ESX is available in two main types, ESX and ESXi, although from version 5 onward only ESXi is continued.

ESX and ESXi before version 5.0 do not support Windows 8/Windows 2012. These Microsoft operating systems can only run on ESXi 5.x or later.[21]

VMware ESXi, a smaller-footprint version of ESX, does not include the ESX Service Console. It is available - without the need to purchase a vCenter license - as a free download from VMware, with some features disabled.[22][23][24]

ESXi apparently stands for 'ESX integrated'.[25]

VMware ESXi originated as a compact version of VMware ESX that allowed for a smaller 32 MB disk footprint on the host. With a simple configuration console for mostly network configuration and remote based VMware Infrastructure Client Interface, this allows for more resources to be dedicated to the guest environments.

Two variations of ESXi exist:

- VMware ESXi Installable

- VMware ESXi Embedded Edition

The same media can be used to install either of these variations depending on the size of the target media.[26] One can upgrade ESXi to VMware Infrastructure 3[27] or to VMware vSphere 4.0 ESXi.

Originally named VMware ESX Server ESXi edition, through several revisions the ESXi product finally became VMware ESXi 3. New editions then followed: ESXi 3.5, ESXi 4, ESXi 5 and (as of 2015) ESXi 6.


GPL violation lawsuit

VMware has been sued by Christoph Hellwig, a Linux kernel developer, for GPL license violations. It was alleged that VMware had misappropriated portions of the Linux kernel,[28] and used them without permission. The lawsuit was dismissed by the court in July 2016[29] and Hellwig announced he would file an appeal.[30]

The appeal was decided February 2019 and again dismissed by the German court, on the basis of not meeting 'procedural requirements for the burden of proof of the plaintiff'.[31]

Related or additional products

The following products operate in conjunction with ESX:

- vCenter Server, enables monitoring and management of multiple ESX, ESXi and GSX servers. In addition, users must install it to run infrastructure services such as:

  - vMotion (transferring virtual machines between servers on the fly whilst they are running, with zero downtime)[32][33]

  - svMotion aka Storage vMotion (transferring virtual machines between Shared Storage LUNs on the fly, with zero downtime)[34]

  - enhanced vMotion aka evMotion (a simultaneous vMotion and svMotion, supported on version 5.1 and above)

  - Distributed Resource Scheduler (DRS) (automated vMotion based on host/VM load requirements/demands)

  - High Availability (HA) (restarting of Virtual Machine Guest Operating Systems in the event of a physical ESX Host failure)

  - Fault Tolerance (almost instant stateful fail-over of a VM in the event of a physical host failure)[35]

- Converter, enables users to create VMware ESX Server- or Workstation-compatible virtual machines from either physical machines or from virtual machines made by other virtualization products. Converter replaces the VMware 'P2V Assistant' and 'Importer' products — P2V Assistant allowed users to convert physical machines into virtual machines; and Importer allowed the import of virtual machines from other products into VMware Workstation.

- vSphere Client (formerly VMware Infrastructure Client), enables monitoring and management of a single instance of ESX or ESXi server. After ESX 4.1, vSphere Client was no longer available from the ESX/ESXi server, but must be downloaded from the VMware web site.

Cisco Nexus 1000v

Network connectivity between ESX hosts and the VMs running on them relies on virtual NICs (inside the VM) and virtual switches. The latter exist in two versions: the 'standard' vSwitch, allowing several VMs on a single ESX host to share a physical NIC, and the 'distributed vSwitch', where the vSwitches on different ESX hosts together form one logical switch. Cisco offers in their Cisco Nexus product-line the Nexus 1000v, an advanced version of the standard distributed vSwitch. A Nexus 1000v consists of two parts: a supervisor module (VSM) and on each ESX host a virtual ethernet module (VEM). The VSM runs as a virtual appliance within the ESX cluster or on dedicated hardware (Nexus 1010 series) and the VEM runs as a module on each host and replaces a standard dvS (distributed virtual switch) from VMware. Configuration of the switch is done on the VSM using the standard NX-OS CLI. It offers capabilities to create standard port-profiles which can then be assigned to virtual machines using vCenter.


There are several differences between the standard dvS and the N1000v. One is that the Cisco switch generally has full support for network technologies such as LACP link aggregation, while the VMware switch supports newer features such as routing based on physical NIC load. The main difference, however, lies in the architecture: the Nexus 1000v works in the same way as a physical Ethernet switch does, while the dvS relies on information from ESX. This has consequences for scalability, for example, where the Kappa limit for an N1000v is 2048 virtual ports against 60000 for a dvS. The Nexus 1000v is developed in co-operation between Cisco and VMware and uses the API of the dvS.[36]

Third party management tools

Because VMware ESX is a leader in the server-virtualisation market,[37] software and hardware vendors offer a range of tools to integrate their products or services with ESX. Examples are the products from Veeam Software, with backup and management applications[38] and a plugin to monitor and manage ESX using HP OpenView,[39] and Quest Software, with a range of management and backup applications. Most major backup-solution providers also have plugins or modules for ESX. Using Microsoft Operations Manager (SCOM) 2007/2012 with a Bridgeways ESX management pack gives a real-time view of ESX datacenter health.

Also, hardware-vendors such as Hewlett-Packard and Dell include tools to support the use of ESX(i) on their hardware platforms. An example is the ESX module for Dell's OpenManage management platform.[40]

VMware has added a Web Client[41] since v5 but it will work on vCenter only and does not contain all features.[42] vEMan[43] is a Linux application which is trying to fill that gap. These are just a few examples: there are numerous 3rd party products to manage, monitor or backup ESX infrastructures and the VMs running on them.[44]

Known limitations

Known limitations of VMware ESXi, as of September 2019, include the following:

Infrastructure limitations

Some maximums in ESXi Server 6.7 may influence the design of data centers:[45]

- Guest system maximum RAM: 6128 GB

- Host system maximum RAM: 16 TB

- Number of hosts in a high availability or Distributed Resource Scheduler cluster: 64

- Maximum number of processors per virtual machine: 256

- Maximum number of processors per host: 768

- Maximum number of virtual CPUs per physical CPU core: 32

- Maximum number of virtual machines per host: 1024

- Maximum number of virtual CPUs per fault tolerant virtual machine: 4

- Maximum guest system RAM per fault tolerant virtual machine: 128 GB

- VMFS5 maximum volume size: 64 TB, but maximum file size is 62 TB minus 512 bytes

Performance limitations

In terms of performance, virtualization imposes a cost in the additional work the CPU has to perform to virtualize the underlying hardware. Instructions that perform this extra work, and other activities that require virtualization, tend to lie in operating system calls. In an unmodified operating system, OS calls introduce the greatest portion of virtualization 'overhead'.[citation needed]

Paravirtualization or other virtualization techniques may help with these issues. VMware developed the Virtual Machine Interface for this purpose, and selected operating systems currently support this. A comparison between full virtualization and paravirtualization for the ESX Server[46] shows that in some cases paravirtualization is much faster.

Network limitations

When using the advanced and extended network capabilities of the Cisco Nexus 1000v distributed virtual switch, the following network-related limitations apply:[36]

- 64 ESX/ESXi hosts per VSM (Virtual Supervisor Module)

- 2048 virtual ethernet interfaces per VMware vDS (virtual distributed switch)

- and a maximum of 216 virtual interfaces per ESX/ESXi host

- 2048 active VLANs (one to be used for communication between VEMs and VSM)

- 2048 port-profiles

- 32 physical NICs per ESX/ESXi (physical) host

- 256 port-channels per VMware vDS (virtual distributed switch)

- and a maximum of 8 port-channels per ESX/ESXi host
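These limits interact: the per-switch interface limit is reached long before every host uses its per-host allowance. A minimal sketch of that arithmetic follows, assuming one VSM corresponds to one vDS; the constant and function names are hypothetical and the values are copied from the list above.

```python
import math

# Limits copied from the Nexus 1000v list above; names are illustrative only.
HOSTS_PER_VSM = 64
VETH_PER_VDS = 2048
VETH_PER_HOST = 216


def vsms_needed(host_count):
    """Minimum number of VSMs so that no VSM manages more than 64 hosts."""
    return math.ceil(host_count / HOSTS_PER_VSM)


def fits_one_switch(host_count, veth_per_host):
    """True if a uniform design stays within the host, VSM and vDS limits."""
    return (
        host_count <= HOSTS_PER_VSM
        and veth_per_host <= VETH_PER_HOST
        and host_count * veth_per_host <= VETH_PER_VDS
    )
```

With a full complement of 64 hosts, the 2048-interface limit allows an average of only 32 virtual ethernet interfaces per host, well under the per-host maximum of 216.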

Fibre Channel Fabric limitations

Regardless of the type of virtual SCSI adapter used, there are these limitations:[47]

- Maximum of 4 Virtual SCSI adapters, one of which should be dedicated to virtual disk use

- Maximum of 64 SCSI LUNs per adapter
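The two limits multiply into an overall ceiling on addressable LUNs. The sketch below works through that arithmetic; the names are hypothetical, and reading "dedicated to virtual disk use" as removing one adapter from the pool available for other LUNs is an assumption, not something the cited source states.

```python
# Limits copied from the list above; names are illustrative only.
MAX_SCSI_ADAPTERS = 4
MAX_LUNS_PER_ADAPTER = 64


def max_luns(adapters=MAX_SCSI_ADAPTERS, reserved_for_virtual_disks=0):
    """LUNs addressable through the adapters not reserved for virtual disks."""
    if not 0 <= reserved_for_virtual_disks <= adapters <= MAX_SCSI_ADAPTERS:
        raise ValueError("adapter counts outside the documented limits")
    return (adapters - reserved_for_virtual_disks) * MAX_LUNS_PER_ADAPTER
```

Under these assumptions, four adapters give 4 × 64 = 256 LUNs in total, or 3 × 64 = 192 if one adapter is set aside for virtual disks.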

See also

- KVM Linux Kernel-based Virtual Machine – an open source hypervisor platform

- Hyper-V – a competitor of VMware ESX from Microsoft

- Xen – an open source hypervisor platform

References

1. 'VMware ESX 4.0 only installs and runs on servers with 64bit x86 CPUs. 32bit systems are no longer supported'. VMware, Inc.

2. 'ESX Server Architecture'. Vmware.com. Archived from the original on 7 November 2009. Retrieved 22 October 2009.

3. VMware: vSphere ESX and ESXi Info Center

4. 'What does ESX stand for?'. Archived from the original on 20 December 2014. Retrieved 3 October 2014.

5. 'Glossary' (PDF). Developer’s Guide to Building vApps and Virtual Appliances: VMware Studio 2.5. Palo Alto: VMware. 2011. p. 153. Retrieved 9 November 2011.

6. 'Did you know VMware Elastic Sky X (ESX) was once called 'Scaleable Server'?'. UP2V. 12 May 2014. Retrieved 9 May 2018.

7. 'VMware ESXi was created by a French guy !!! | ESX Virtualization'. ESX Virtualization. 26 September 2009. Retrieved 9 May 2018.

8. 'ESX Server Datasheet'

9. 'ESX Server Architecture'. Vmware.com. Archived from the original on 29 September 2007. Retrieved 1 July 2009.

10. 'ESX machine boots'. Video.google.com.au. 12 June 2006. Retrieved 1 July 2009.

11. 'VMKernel Scheduler'. vmware.com. Retrieved 10 March 2016.

12. Foley, Mike. 'It's a Unix system, I know this!'. VMware Blogs. VMware.

13. 'Support for 64-bit Computing'. Vmware.com. 19 April 2004. Archived from the original on 2 July 2009. Retrieved 1 July 2009.

14. Gerstel, Markus: 'Virtualisierungsansätze mit Schwerpunkt Xen'. Archived 10 October 2013 at the Wayback Machine

15. VMware ESX

16. 'VMware ESX Server 2: NUMA Support' (PDF). Palo Alto, California: VMware Inc. 2005. p. 7. Retrieved 29 March 2011.

    SRAT (system resource allocation table) – table that keeps track of memory allocated to a virtual machine.

17. 'ESX Server Open Source'. Vmware.com. Retrieved 1 July 2009.

18. 'ESX Hardware Compatibility List'. Vmware.com. 10 December 2008. Retrieved 1 July 2009.

19. 'ESXi vs. ESX: A comparison of features'. Vmware, Inc. Retrieved 1 June 2009.

20. 'KB: Decoding Machine Check Exception (MCE) output after a purple diagnostic screen'. VMware, Inc.

21. VMware KB article: Windows 8/Windows 2012 doesn't boot on ESX, visited 12 September 2012

22. 'Download VMware vSphere Hypervisor (ESXi)'. www.vmware.com. Retrieved 22 July 2014.

23. 'Getting Started with ESXi Installable' (PDF). VMware. Retrieved 22 July 2014.

24. 'VMware ESX and ESXi 4.1 Comparison'. Vmware.com. Retrieved 9 June 2011.

25. 'What do ESX and ESXi stand for?'. VM.Blog. 31 August 2011. Retrieved 21 June 2016.

    Apparently, the 'i' in ESXi stands for Integrated, probably coming from the fact that this version of ESX can be embedded in a small bit of flash memory on the server hardware.

26. Andreas Peetz. 'ESXi embedded vs. ESXi installable FAQ'. Retrieved 11 August 2014.

27. 'Free VMware ESXi: Bare Metal Hypervisor with Live Migration'. Vmware.com. Retrieved 1 July 2009.

28. 'Conservancy Announces Funding for GPL Compliance Lawsuit'. sfconservancy.org. 5 March 2015. Retrieved 27 August 2015.

29. 'German court ruling' (PDF). 8 July 2016.

30. 'Hellwig To Appeal VMware Ruling After Evidentiary Set Back in Lower Court'. 9 August 2016.

31. 'Klage von Hellwig gegen VMware erneut abgewiesen'. 1 March 2019.

32. VMware Blog by Kyle Gleed: vMotion: what's going on under the covers, 25 February 2011, visited 2 February 2012

33. VMware website vMotion brochure. Retrieved 3 February 2012

34. http://www.vmware.com/files/pdf/VMware-Storage-VMotion-DS-EN.pdf

35. http://www.vmware.com/files/pdf/VMware-Fault-Tolerance-FT-DS-EN.pdf

36. Overview of the Nexus 1000v virtual switch, visited 9 July 2012

37. VMware continues virtualization market romp, 18 April 2012. Visited 9 July 2012

38. About Veeam, visited 9 July 2012

39. Veeam OpenView plugin for VMware, visited 9 July 2012

40. OpenManage (omsa) support for ESXi 5.0, visited 9 July 2012

41. VMware info about Web Client – VMware ESXi/ESX 4.1 and ESXi 5.0 Comparison

42. Availability of vSphere Client for Linux systems – What the web client can do and what not

43. vEMan website vEMan – Linux vSphere client

44. Petri website 3rd party ESX tools, 23 December 2008. Visited: 11 September 2001

45. 'Configuration Maximums' (HTML). VMware, Inc. 2019. Retrieved 5 September 2019.

46. 'Performance of VMware VMI' (PDF). VMware, Inc. 13 February 2008. Retrieved 22 January 2009.

47. 'vSphere 6.7 Configuration Maximums'. VMware Configuration Maximum Tool. VMware. Retrieved 12 July 2019.

External links

I am running an ESXi vSphere Client Version 6.0.0, but with all the different documentation and changes I have trouble understanding my limitations. From the official documentation I see that my limit for physical CPUs should be unlimited, but I can only give a VM up to 8 vCPUs.

From other sources I read that I have a limit of 2 physical CPUs in the free version. I see that the memory limitation is gone, which I am happy about.

Is there any document or anything which gives me the actual limitations? It seems that VMware is hiding it a bit ;) , at least I couldn't use Google efficiently to gather the correct info here.

I am only interested in the Hardware limitations, not the limits for creating failovers etc.

3 Answers

Those two stats you mention are different; it's 8 virtualised CPUs per VM and 2 physical CPU sockets that they're talking about - not the same thing. BTW if you're going with the free version have a look at THIS great new ESXi add-in that gives you a vCenter-like interface into your host via a web client - it's really new and really useful :)

Chopper3

Free version Hypervisor (ESXi) Version 6.0

- 2 (physical) CPU limit

- No Ram limit (removed since 5.5)

Hypervisor Spec

- Number of cores per physical CPU: No limit

- Number of physical CPUs per host: No limit

- Number of logical CPUs per host: 480

- Maximum vCPUs per virtual machine: 8
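To make the difference between the per-host and per-VM numbers concrete, here is a rough sketch, not an official tool. The function names are made up for illustration, the two limits are copied from the list above, and the logical CPU count uses the usual sockets × cores × threads arithmetic.

```python
# Limits copied from the free ESXi 6.0 list above; names are illustrative only.
MAX_LOGICAL_CPUS_PER_HOST = 480
MAX_VCPUS_PER_VM = 8


def logical_cpus(sockets, cores_per_socket, threads_per_core=1):
    """Logical CPUs the host exposes (threads_per_core=2 with hyper-threading)."""
    return sockets * cores_per_socket * threads_per_core


def free_license_violations(sockets, cores_per_socket, threads_per_core,
                            largest_vm_vcpus):
    """Return a list of limits that the planned host or its largest VM exceeds."""
    problems = []
    host_cpus = logical_cpus(sockets, cores_per_socket, threads_per_core)
    if host_cpus > MAX_LOGICAL_CPUS_PER_HOST:
        problems.append(f"{host_cpus} logical CPUs exceeds {MAX_LOGICAL_CPUS_PER_HOST}")
    if largest_vm_vcpus > MAX_VCPUS_PER_VM:
        problems.append(f"{largest_vm_vcpus} vCPUs per VM exceeds {MAX_VCPUS_PER_VM}")
    return problems
```

So a two-socket, 16-core host with hyper-threading has 64 logical CPUs and is fine on the host side, but a 12-vCPU VM on it would still hit the 8 vCPU per-VM cap.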

When you apply the ESXi 6 free license, it appears :

Extracted from: http://www.sysadmit.com/2016/03/vmware-esxi-gratuito-limitaciones.html